Machine learning is a type of artificial intelligence that helps software learn to make predictions without being explicitly programmed to do so. It uses past data to estimate what is likely to happen in the future.

- Why Is Machine Learning Important?

- A Brief History of Machine Learning

- Difference Between Machine Learning, Artificial Intelligence, and Deep Learning

- What Are The Different Types of Machine Learning?

- Advantages and Disadvantages of Machine Learning

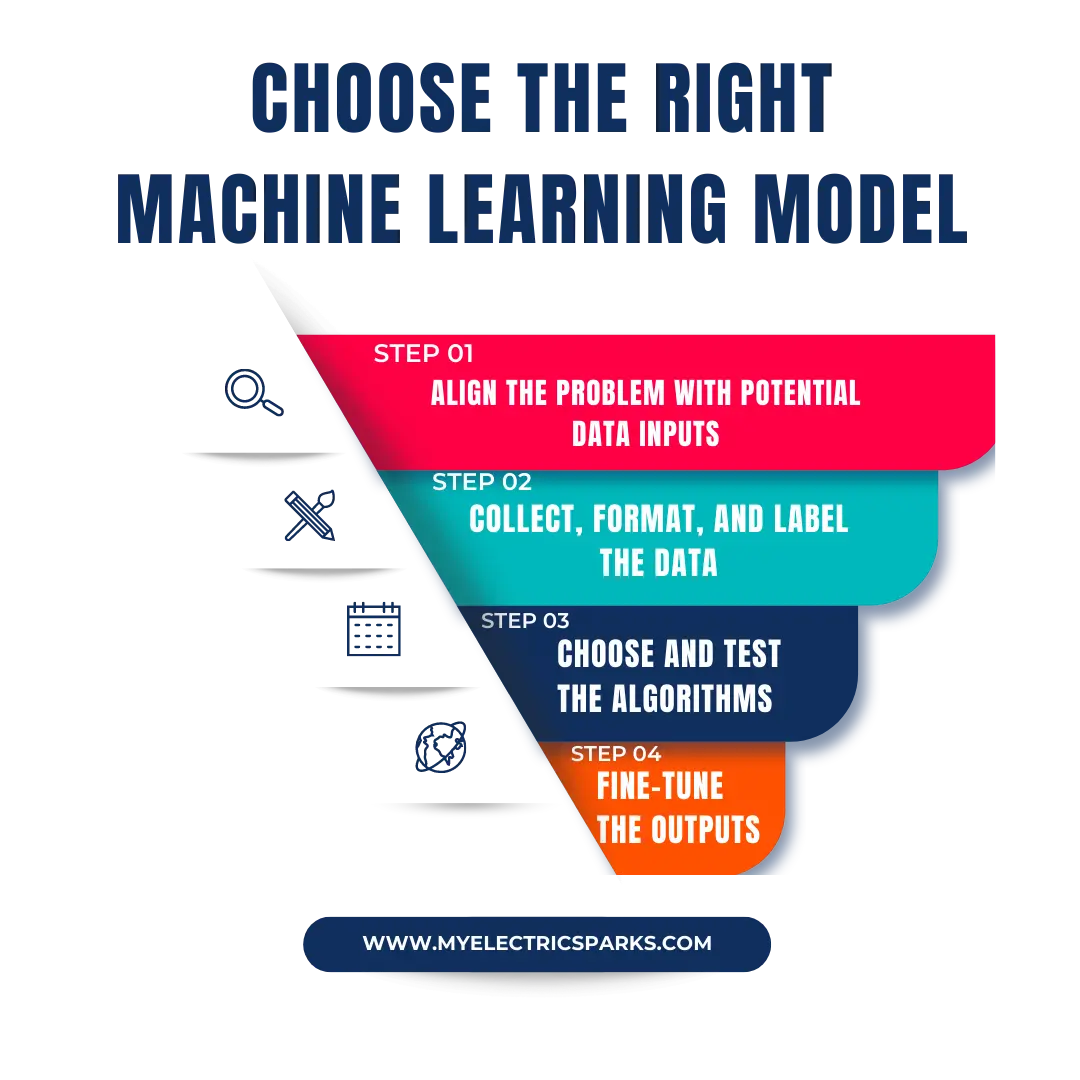

- How To Choose The Right Machine Learning Model?

  - Step 1: Align the problem with potential data inputs

  - Step 2: Collect, format, and label the data

  - Step 3: Choose and test the algorithms

  - Step 4: Fine-tune the outputs

- Understanding The Significance of Human-Interpretable Machine Learning

- Applications of Machine Learning

  - 1. Facial recognition/Image recognition

  - 2. Automatic Speech Recognition

  - 3. Financial Services

  - 4. Marketing and Sales

  - 5. Healthcare

- Future of Machine Learning

- FAQs of Machine Learning

  - How do I get started with machine learning?

  - How is machine learning used in business and industry?

  - What are the advantages of using machine learning in data analysis?

  - What is machine learning in Python?

  - What is machine learning in data science?

  - What is machine learning in AI?

  - What programming languages are used for machine learning?

  - What are some common machine learning algorithms?

  - How is machine learning applied in natural language processing?

Machine learning is a branch of artificial intelligence that helps machines learn to make decisions the way humans do. It allows machines to learn and develop their own programs without humans explicitly telling them what to do. This learning is achieved by feeding good-quality data to the machine and using different algorithms to build models that learn from that data.

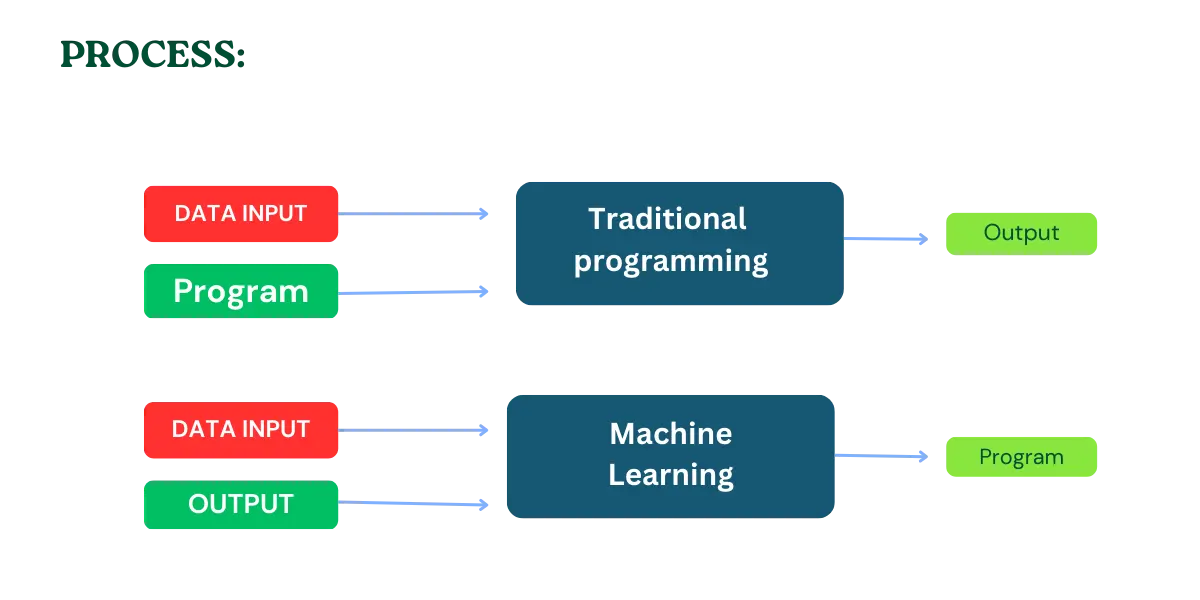

The difference between machine learning and traditional programming is this: in conventional programming, a pre-written program is fed to the machine along with input data to generate output. In machine learning, input data and the corresponding outputs are provided to the machine during the learning phase, and the machine creates the program (the model) for itself.
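To make the contrast concrete, here is a minimal sketch assuming a toy task, converting Celsius to Fahrenheit (the task, data, and function names are illustrative and not from the original). In the traditional version a human writes the rule; in the machine learning version the slope and intercept are estimated from example input/output pairs using ordinary least squares.

```python
# Traditional programming vs. machine learning on a toy task (illustrative only).


def fahrenheit_rule(celsius: float) -> float:
    """Traditional programming: a human writes the rule explicitly."""
    return celsius * 9 / 5 + 32


def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Machine learning: estimate slope and intercept from examples
    using ordinary least squares for a single input variable."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Inputs and their known outputs, supplied during the "learning phase".
celsius_examples = [-10.0, 0.0, 10.0, 20.0, 30.0]
fahrenheit_examples = [fahrenheit_rule(c) for c in celsius_examples]

slope, intercept = fit_line(celsius_examples, fahrenheit_examples)


def learned_rule(celsius: float) -> float:
    """The 'program' the machine derived from the examples."""
    return slope * celsius + intercept


print(f"learned: F = {slope:.2f} * C + {intercept:.2f}")
print(fahrenheit_rule(25.0), round(learned_rule(25.0), 2))
```

Real problems rarely have an exact formula behind the data, which is exactly where learning the rule from examples pays off.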

If you want to automate a task using machine learning, choose an algorithm suited to your data type. In general, the more good-quality data the machine learns from, the better its predictions tend to become.

To understand it better, here is an infographic that shows how it works:

Why Is Machine Learning Important?

Machine learning is crucial in providing enterprises with valuable insights into customer behavior and business operations, enabling them to identify patterns and develop innovative products.

Many of the world’s most successful companies, including Facebook, Google, and Microsoft, have made machine learning an integral part of their operations, which has helped them gain a competitive edge. For companies seeking to improve performance and remain relevant in a highly competitive market, incorporating machine learning into business strategy can be a game-changer.

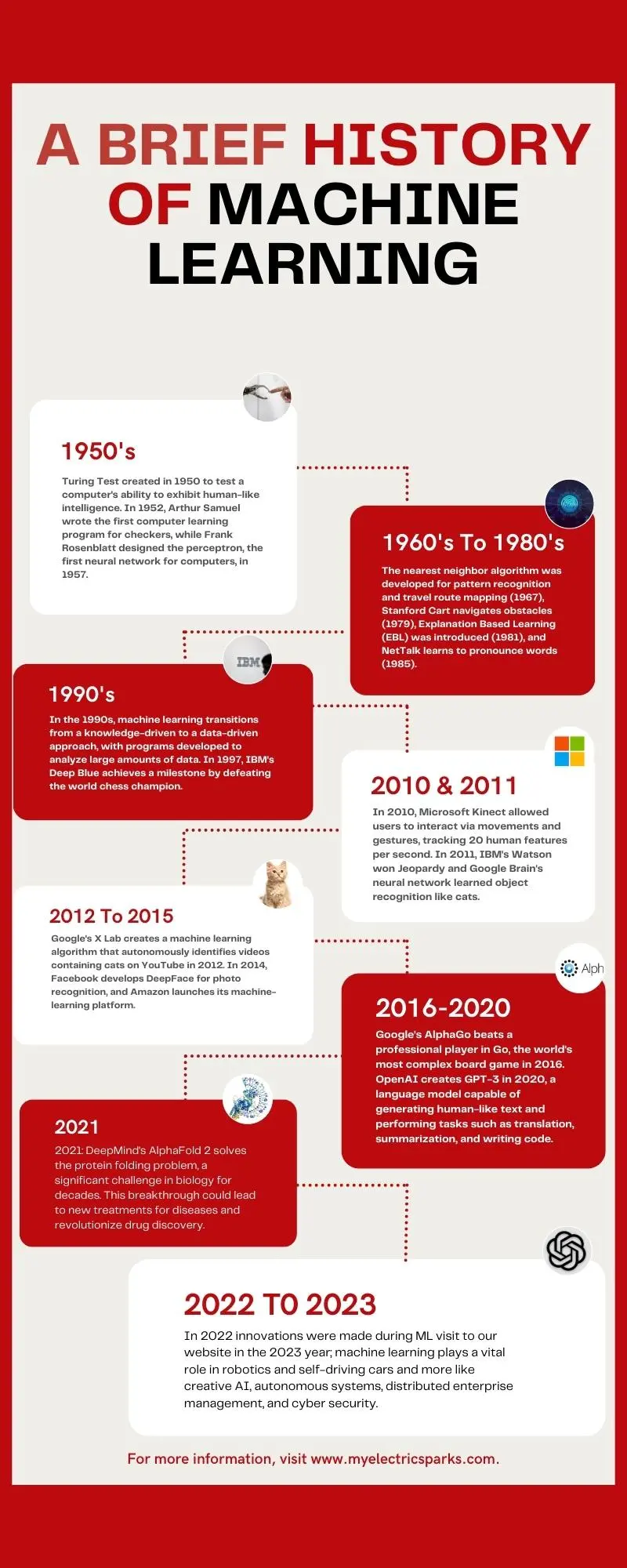

A Brief History of Machine Learning

Here is a year-by-year breakdown of how machine learning has developed and grown in popularity.

1950: Alan Turing creates the Turing Test to determine a computer’s ability to exhibit intelligent behavior equivalent to, or indistinguishable from, that of a human.

1952: Arthur Samuel writes the first computer learning program for the game of checkers, which studies winning strategies and incorporates them into its program.

1957: Frank Rosenblatt designs the perceptron, the first neural network for computers, inspired by the way neurons in the human brain process information.

1967: The nearest neighbor algorithm is developed, allowing computers to begin using basic pattern recognition. This leads to algorithms for mapping travel routes.

1979: Students at Stanford University invent the Stanford Cart, which can navigate obstacles in a room on its own.

1981: Gerald DeJong introduces Explanation-Based Learning (EBL), in which a computer analyzes training data and creates a general rule it can follow by discarding unimportant data.

1985: Terry Sejnowski invents NetTalk, which learns to pronounce words as a baby does.

The 1990s: Machine learning shifts from a knowledge-driven to a data-driven approach. Scientists develop computer programs to analyze large amounts of data and draw conclusions.

1997: IBM’s Deep Blue beats world chess champion Garry Kasparov.

2006: Geoffrey Hinton popularizes the term “deep learning” to describe new algorithms that let computers “see” and distinguish objects and text in images and videos.

2010: The Microsoft Kinect can track 20 human features at a rate of 30 times per second, allowing people to interact with the computer via movements and gestures.

2011: IBM’s Watson beats its human competitors at Jeopardy! Google Brain is also developed, and its deep neural network can learn to discover and categorize objects much the way a cat does.

2012: Google’s X Lab develops a machine learning algorithm that autonomously browses YouTube videos to identify videos containing cats.

2014: Facebook develops DeepFace, a software algorithm that recognizes or verifies individuals in photos at roughly the same level as humans.

2015: Amazon launches its machine learning platform, and Microsoft creates the Distributed Machine Learning Toolkit, which enables efficient distribution of machine learning problems across multiple computers.

2016: Google’s AlphaGo algorithm, developed by DeepMind, beats top professional Lee Sedol at Go, considered one of the world’s most complex board games.

2020: Researchers at OpenAI create GPT-3, a language model that can generate human-like text and perform tasks such as translation, summarization, and writing code.

2021: DeepMind’s AlphaFold 2 makes a major breakthrough on the protein folding problem, which had challenged biologists for decades, by predicting protein structures with high accuracy. This advance could lead to new treatments for diseases and transform drug discovery.

2022: Notable machine learning innovations this year include:

- TinyML: Efficient deep learning on small, low-power hardware.

- Quantum ML: Research combining quantum computing and ML.

- AutoML: Tools that make ML projects more accessible by automating model building.

- MLOps: Practices for deploying, monitoring, and managing ML models reliably in production.

- Full Stack Deep Learning: Efficient ML model training and AI system deployment.

2023: Machine learning plays a vital role in robotics and self-driving cars, as well as in creative AI, autonomous systems, distributed enterprise management, and cybersecurity.

Difference Between Machine Learning, Artificial Intelligence, and Deep Learning

Machine learning, artificial intelligence (AI), and deep learning are related concepts but are not interchangeable. Here are the key differences between these three terms:

| Concept | Definition | Example |
|---|---|---|
| Machine learning | Subset of AI where algorithms are trained on data to make predictions or decisions without being explicitly programmed | Predicting whether a customer will churn based on their purchase history |
| Artificial intelligence | Broad concept that encompasses machine learning and other techniques, where machines are programmed to perform tasks that typically require human intelligence | Autonomous vehicles that can make decisions based on real-time data |
| Deep learning | Subset of machine learning that uses artificial neural networks with multiple layers to learn from data | Image recognition systems that can identify objects in pictures |

Machine learning is a subset of AI that focuses on learning from data. Deep learning, in turn, is a subset of machine learning that uses artificial neural networks with multiple layers to learn from data. AI encompasses both machine learning and other techniques for creating intelligent systems.

What Are The Different Types of Machine Learning?

In classical machine learning, algorithms are categorized by how they learn to improve the accuracy of their predictions.

There are four main types:

- supervised learning

- unsupervised learning

- semi-supervised learning

- reinforcement learning

The choice of algorithm depends on the type of data scientists aim to predict. Each approach and its applications are described below.

Supervised Learning

Supervised learning is a problem-solving method that uses a model to understand the relationship between input and target variables. In supervised learning tasks, the training data comprises information about input variables and their corresponding target variable.

The input variable is represented as “x,” while the target variable is defined as “y.” A supervised learning algorithm attempts to learn a hypothetical function, described as “y=f(x),” which maps inputs to outputs.

The learning process is called supervised because the correct outputs are already known: each time the algorithm makes a prediction on the training data, it is corrected based on its error, improving its results over time.

The model is trained on the training data, which consists of both input and output variables. Once trained, the model can make predictions on new, unseen test data.

During the test phase, only the input variables are provided, and the model’s predicted outputs are compared with the actual target values to estimate the model’s performance.
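The train-then-test workflow above can be sketched in a few lines. This minimal example assumes a tiny hypothetical dataset in which y is roughly 2x + 1 and a one-variable linear model f(x) = w * x + b (all names and values are illustrative). During training, each prediction is compared with the known target and the parameters are nudged to reduce the error (stochastic gradient descent on squared error); the held-out test pairs are used only to measure performance.

```python
# Supervised learning sketch: learn y = f(x) from labeled pairs, then evaluate on unseen data.
# The dataset is hypothetical and chosen so that y is close to 2x + 1.

train = [(0.0, 1.1), (1.0, 2.9), (2.0, 5.2), (3.0, 6.8), (4.0, 9.1)]  # (x, y) pairs with known targets
test = [(5.0, 11.0), (6.0, 12.9)]  # unseen pairs: the model only sees x

w, b = 0.0, 0.0          # parameters of f(x) = w * x + b
learning_rate = 0.02

for _ in range(2000):
    for x, y in train:
        prediction = w * x + b
        error = prediction - y  # how far the prediction is from the known answer
        # "Correct" the model: move each parameter against the gradient of the squared error.
        w -= learning_rate * error * x
        b -= learning_rate * error


def predict(x: float) -> float:
    return w * x + b


# Test phase: feed only the inputs, then compare predictions with the true targets.
mse = sum((predict(x) - y) ** 2 for x, y in test) / len(test)
print(f"learned f(x) = {w:.2f} * x + {b:.2f}, test MSE = {mse:.3f}")
```

Keeping the test pairs out of the training loop is what makes the final error an honest estimate of performance on new data.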

There are two types of supervised learning problems: classification and regression.

Classification involves predicting a class label, while regression involves predicting a numerical value.

An example of a classification task is the MNIST handwritten digits dataset. Its inputs are images of handwritten digits, and each image is assigned a class label identifying which digit, from 0 to 9, it shows.

The Boston house-price dataset is an example of a regression problem. Its inputs are features of houses, and the target variable is a numerical value representing the median home value (in thousands of dollars).
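The classification/regression distinction can be illustrated with a k-nearest-neighbors model, a technique chosen here for illustration rather than one named in the text. In this minimal sketch the same neighbor search returns a class label (by majority vote) for classification and a number (by averaging) for regression. Both datasets are small and hypothetical; the house values are made up and not drawn from the Boston dataset, and the features are left unscaled, which a real k-NN setup would usually address.

```python
from collections import Counter
import math


def nearest(train, query, k):
    """Return the k training examples closest to `query` (Euclidean distance)."""
    return sorted(train, key=lambda item: math.dist(item[0], query))[:k]


def knn_classify(train, query, k=3):
    """Classification: predict a class label by majority vote of the neighbors."""
    labels = [label for _, label in nearest(train, query, k)]
    return Counter(labels).most_common(1)[0][0]


def knn_regress(train, query, k=3):
    """Regression: predict a numerical value by averaging the neighbors' targets."""
    values = [value for _, value in nearest(train, query, k)]
    return sum(values) / len(values)


# Hypothetical classification data: ((weight in g, diameter in cm), class label).
fruit = [((150, 7.0), "apple"), ((170, 7.5), "apple"), ((160, 7.2), "apple"),
         ((120, 6.0), "lemon"), ((110, 5.8), "lemon"), ((115, 6.1), "lemon")]

# Hypothetical regression data: ((rooms, area in m^2), value in thousands of dollars).
houses = [((3, 90), 250.0), ((4, 120), 320.0), ((2, 60), 180.0),
          ((5, 150), 400.0), ((3, 100), 270.0)]

print(knn_classify(fruit, (155, 7.1)))  # a class label
print(knn_regress(houses, (3, 95)))     # a numerical value
```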

Here is the table that summarizes all the information:

| Concept | Description | Types | Examples |
|---|---|---|---|
| Supervised Learning | A problem-solving method that uses a model to learn the relationship between input and target variables | Classification and regression | MNIST handwritten digits dataset (classification); Boston house-price dataset (regression) |
| Input Variables | Represented as “x” | – | Images of handwritten digits (classification); features of houses (regression) |
| Target Variables | Represented as “y” | – | Digit class labels 0 to 9 (classification); home values (regression) |
| Hypothetical Function | A function that maps inputs to outputs, represented as “y=f(x)” | – | – |
| Learning Process | Supervised because the outputs are known; the algorithm is corrected each time it predicts to optimize results | – | – |
| Training Data | Data used to train the model, consisting of both input and output variables | – | – |
| Test Data | Unseen data used to evaluate the trained model; only input variables are provided, and the model’s predictions are compared with the target variables | – | – |

Unsupervised Learning

In unsupervised learning, the model aims to learn independently by identifying patterns and relationships among data without guidance from a supervisor or teacher.

This type of learning only operates on input variables without any target variables to direct the learning process.

The objective of unsupervised learning is to uncover underlying patterns in the data to understand the data better.

There are two primary categories of unsupervised learning: clustering and density estimation. Clustering involves identifying different groups in the data, while density estimation aims to summarize the distribution of the data.

These techniques are used to discover patterns within the data. Visualization and projection can also be considered forms of unsupervised learning, as they provide insight into the data.

Visualization involves creating plots and graphs to represent the data, while projection focuses on reducing the dimensionality of the data.

| Type of Unsupervised Learning | Description |
|---|---|
| Clustering | Identifies different groups in the data |
| Density Estimation | Summarizes the distribution of the data |
| Visualization | Creates plots and graphs to represent the data |
| Projection | Reduces the dimensionality of the data |

The table covers the two primary categories of unsupervised learning (clustering and density estimation) and the two additional forms (visualization and projection), with a brief description of each.
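Clustering can be illustrated with k-means, one common clustering algorithm (chosen here for illustration; the text does not name a specific one). This minimal sketch assumes a handful of hypothetical, unlabeled 2-D points. The algorithm alternates between assigning each point to its nearest centroid and moving each centroid to the mean of its assigned points; no target variable is involved at any step.

```python
import math


def kmeans(points, initial_centroids, iterations=10):
    """Minimal k-means: works only on input points, with no target variable."""
    centroids = list(initial_centroids)
    clusters = []
    for _ in range(iterations):
        # Assignment step: group each point with its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            index = min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
            clusters[index].append(p)
        # Update step: move each centroid to the mean of its cluster (keep it if the cluster is empty).
        centroids = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster)) if cluster else centroids[i]
            for i, cluster in enumerate(clusters)
        ]
    return centroids, clusters


# Hypothetical unlabeled data: two loose groups of points.
points = [(1.0, 1.2), (0.8, 1.0), (1.1, 0.9), (5.0, 5.1), (5.2, 4.9), (4.8, 5.0)]

# Start from two of the data points (a simple, deterministic initialization for this sketch).
centroids, clusters = kmeans(points, initial_centroids=[points[0], points[3]])
for centroid, members in zip(centroids, clusters):
    print(centroid, members)
```

In practice the number of clusters and the initialization matter a great deal; libraries typically run several random initializations and keep the best result.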

Reinforcement Learning

Reinforcement learning describes problems in which an agent operates in an environment and receives feedback, in the form of rewards, from the environment based on its actions.

These rewards can be positive or negative, and the agent adjusts its behavior accordingly. Unlike supervised learning, there is no fixed training dataset; the machine learns from its own experience.

An example of a reinforcement problem is playing a game where the agent aims to achieve a high score.

The agent makes moves in the game based on the feedback received from the environment through rewards or penalties. Reinforcement learning has shown impressive results in applications such as Google’s AlphaGo, which defeated the world’s top-ranked Go player.

The table below summarizes the key points:

| Aspect | Description |
|---|---|
| Reinforcement Learning | An agent operates in an environment and receives feedback or rewards from the environment based on its actions |
| Training Dataset | Unlike supervised learning, there is no fixed training dataset for the machine |
| Examples | Playing a game where the agent aims to achieve a high score |
| Results | Impressive results in applications such as Google’s AlphaGo, which defeated the world’s top-ranked Go player |
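A minimal reinforcement learning sketch, assuming a hypothetical "game": a five-cell corridor where the agent starts in the leftmost cell, can move left or right, and receives a reward of +1 only on reaching the rightmost cell. It uses tabular Q-learning with an epsilon-greedy policy (one standard RL algorithm, chosen for illustration). There is no training dataset; the agent improves purely from the rewards the environment returns.

```python
import random

N_STATES = 5              # corridor cells 0..4; reaching cell 4 ends the game
ACTIONS = (-1, +1)        # move left or move right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.1  # learning rate, discount factor, exploration rate

random.seed(0)
q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}  # estimated value of each action in each state


def step(state, action):
    """Environment: apply an action and return (next_state, reward, done)."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done


def choose_action(state):
    """Epsilon-greedy: usually exploit the best-known action, sometimes explore."""
    if random.random() < EPSILON:
        return random.choice(ACTIONS)
    best = max(q[(state, a)] for a in ACTIONS)
    return random.choice([a for a in ACTIONS if q[(state, a)] == best])  # break ties randomly


for _ in range(200):  # episodes
    state, done = 0, False
    while not done:
        action = choose_action(state)
        next_state, reward, done = step(state, action)
        # Q-learning update: move the estimate toward reward + discounted best future value.
        best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
        q[(state, action)] += ALPHA * (reward + GAMMA * best_next - q[(state, action)])
        state = next_state

policy = [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]
print(policy)  # +1 means "move right"
```

Systems like AlphaGo combine this reward-driven idea with deep neural networks and search, but the feedback loop is the same in spirit.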

Semi-Supervised Learning

Semi-supervised learning is a type of machine learning where an algorithm is trained on a small amount of labeled data and then uses this knowledge to categorize new, unlabeled data.

This method balances the accuracy of supervised learning with the efficiency of unsupervised learning. Supervised learning algorithms perform better with labeled data, but labeling can be time-consuming and costly.

Semi-supervised learning is helpful in areas such as machine translation, where an algorithm can be trained to translate languages with incomplete dictionaries, and fraud detection, where only a few positive examples are available for training. It can also be used to label data automatically: algorithms trained on small labeled datasets can learn to apply labels to much larger datasets with high accuracy.
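One common semi-supervised technique is self-training (pseudo-labeling): fit a model on the few labeled examples, label the unlabeled points it is most confident about, and refit. This minimal sketch assumes hypothetical 1-D data and uses a nearest-centroid classifier with a distance-margin confidence rule; the classifier, the `MIN_MARGIN` threshold, and all names are illustrative choices, not from the original.

```python
# Self-training (pseudo-labeling) sketch with hypothetical 1-D data.

labeled = [(1.0, "low"), (9.0, "high")]  # very few labeled examples
unlabeled = [1.5, 2.0, 2.5, 3.0, 7.0, 7.5, 8.0, 8.5, 5.2]

MIN_MARGIN = 2.0  # hypothetical confidence threshold


def class_centroids(data):
    """Mean feature value for each class label."""
    groups = {}
    for x, label in data:
        groups.setdefault(label, []).append(x)
    return {label: sum(xs) / len(xs) for label, xs in groups.items()}


def predict_with_margin(centroids, x):
    """Nearest-centroid prediction plus a confidence margin:
    how much closer x is to the nearest centroid than to the runner-up."""
    ranked = sorted(centroids, key=lambda label: abs(x - centroids[label]))
    nearest = abs(x - centroids[ranked[0]])
    runner_up = abs(x - centroids[ranked[1]])
    return ranked[0], runner_up - nearest


remaining = list(unlabeled)
while remaining:
    centroids = class_centroids(labeled)
    confident = []
    for x in remaining:
        label, margin = predict_with_margin(centroids, x)
        if margin >= MIN_MARGIN:
            confident.append((x, label))
    if not confident:
        break  # nothing left that the model is confident about
    labeled.extend(confident)  # add pseudo-labeled points to the training set
    newly_labeled = {x for x, _ in confident}
    remaining = [x for x in remaining if x not in newly_labeled]

print(sorted(labeled))
print("still unlabeled:", remaining)
```

The ambiguous point (5.2) is deliberately left unlabeled; a stricter or looser threshold trades labeling coverage against the risk of propagating wrong labels.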

Advantages and Disadvantages of Machine Learning

Machine learning is a rapidly evolving field that has found applications in a wide range of domains. From predicting customer behavior to forming the operating system for self-driving cars, ML has transformed how we interact with technology.

While ML brings numerous benefits, it is essential to acknowledge that it also has some drawbacks. This section will explore some of this powerful technology’s key advantages and disadvantages.

Advantages:

Machine learning can provide a deeper understanding of customers by analyzing their data and behaviors over time.

By learning associations, machine learning can help tailor product development and marketing initiatives to customer demand.

Machine learning can be at the core of a business model, as seen in companies like Uber and Google.

Uber uses machine learning algorithms to match drivers with riders, while Google uses them to surface relevant search ads.

Machine learning algorithms can continuously learn and improve over time, making them ideal for tasks that require ongoing optimization.

Disadvantages:

Machine learning projects can be expensive due to the high salaries commanded by data scientists and the cost of software infrastructure.

Machine learning algorithms can be biased if trained on data sets that exclude specific populations or contain errors.

Biased models can lead to inaccurate representations of the world, resulting in regulatory and reputational harm for enterprises that rely on them.

Bias in machine learning can also lead to discrimination, a serious concern that can negatively impact individuals and society.

How To Choose The Right Machine Learning Model?

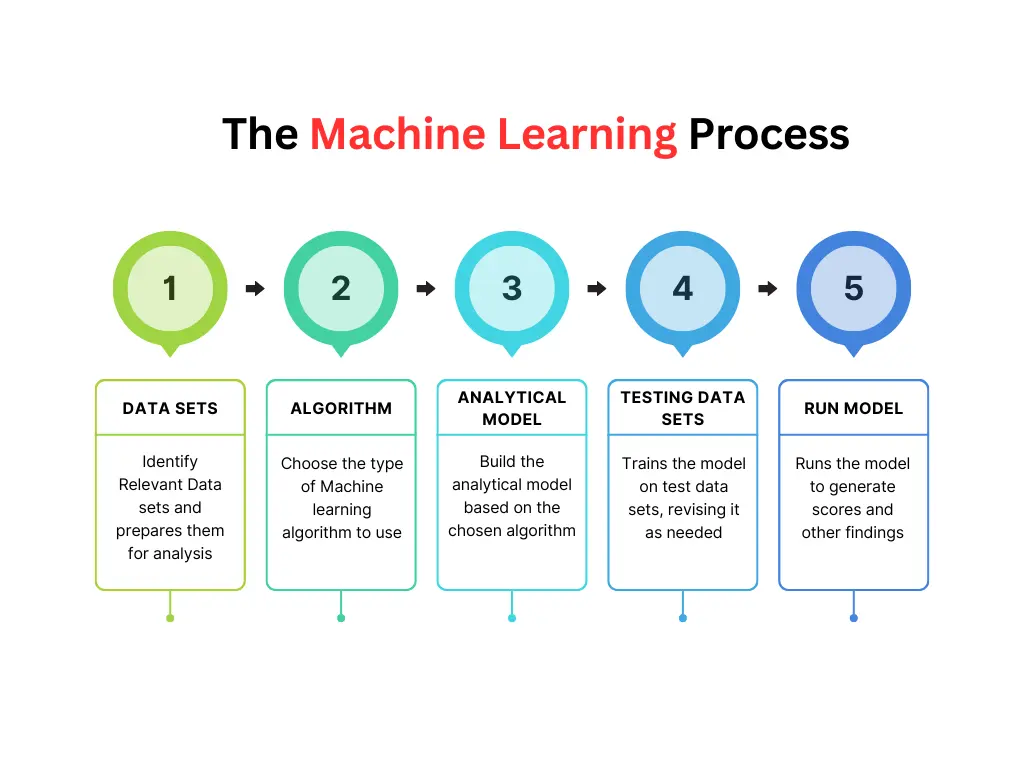

When choosing a suitable machine learning model to solve a problem, it’s essential to approach the process strategically to avoid wasting time and resources. Here are the key steps to follow:

Step 1: Align the problem with potential data inputs

Start by aligning the problem you want to solve with the possible data inputs that could be used to find a solution. This step requires collaboration between data scientists and experts who deeply understand the problem you are trying to solve.

Step 2: Collect, format, and label the data

Once you have identified the potential data inputs, the next step is to collect the data, format it, and label it if necessary. Data scientists usually lead this process with assistance from data wranglers.

Step 3: Choose and test the algorithms

After you have collected and prepared the data, it’s time to choose which algorithm(s) to use and test their performance. Data scientists with the expertise to select the best algorithm for the problem usually do this step.

Step 4: Fine-tune the outputs

Finally, continue to fine-tune the outputs until they reach an acceptable level of accuracy. Data scientists typically carry out this step, working with domain experts to gather feedback and ensure the model meets the specific needs of the problem being solved.

By following these steps, you can approach the choice of a machine learning model strategically and increase the likelihood of finding an effective solution to your problem.
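Step 3 (choose and test the algorithms) often comes down to holding out a validation split and comparing candidate models on it. This minimal sketch assumes a hypothetical labeled dataset and two deliberately simple candidates, a majority-class baseline and a single-threshold rule; in a real project the candidates, the metric, and the split would be chosen for the specific problem.

```python
import random

random.seed(42)

# Hypothetical labeled data: one feature x; the label is 1 when x > 6, with about 10% of labels flipped as noise.
data = []
for _ in range(200):
    x = random.uniform(0, 10)
    label = int(x > 6)
    if random.random() < 0.1:
        label = 1 - label
    data.append((x, label))

# Hold out part of the data so candidates are compared on examples they were not fitted to.
train, validation = data[:150], data[150:]


def accuracy(model, rows):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(x) == y for x, y in rows) / len(rows)


# Candidate 1: a baseline that always predicts the most common training label.
majority_label = max((0, 1), key=lambda c: sum(y == c for _, y in train))


def baseline(x):
    return majority_label


# Candidate 2: a one-feature threshold rule, with the threshold fitted on the training data.
best_threshold = max((t / 10 for t in range(101)),
                     key=lambda t: accuracy(lambda x: int(x > t), train))


def threshold_rule(x):
    return int(x > best_threshold)


scores = {
    "majority baseline": accuracy(baseline, validation),
    "threshold rule": accuracy(threshold_rule, validation),
}
for name, score in scores.items():
    print(f"{name}: validation accuracy = {score:.2f}")
print("selected:", max(scores, key=scores.get))
```

Comparing against a trivial baseline is a cheap sanity check: a candidate that cannot beat it is not learning anything useful.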

Understanding The Significance of Human-Interpretable Machine Learning

Explaining how a specific ML model works can get tricky when the model is complex. In certain industries, such as banking and insurance, data scientists must use simpler machine learning models that can be easily explained.

This is particularly important because these industries have heavy compliance burdens, and every decision must be justified and explained.

While complex models may provide accurate predictions, communicating how the output was determined to a non-expert can be challenging.
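As a small illustration of why simple models are easier to justify, this sketch learns a single-feature decision rule (a "decision stump") from hypothetical loan data and prints it as a sentence a non-expert can check. The features, values, and approval labels are invented for illustration, and the search only considers "feature >= threshold" rules; real credit models involve far more data and regulatory review.

```python
# Hypothetical loan applications: (features, approved?). Illustrative data only.
applications = [
    ({"income_k": 30, "debt_ratio": 0.6}, False),
    ({"income_k": 45, "debt_ratio": 0.5}, False),
    ({"income_k": 52, "debt_ratio": 0.2}, True),
    ({"income_k": 60, "debt_ratio": 0.4}, True),
    ({"income_k": 75, "debt_ratio": 0.3}, True),
    ({"income_k": 38, "debt_ratio": 0.1}, False),
]


def fit_stump(rows):
    """Find the single rule 'approve if feature >= threshold'
    that classifies the most training rows correctly."""
    best = None
    for feature in rows[0][0]:
        for threshold in sorted({features[feature] for features, _ in rows}):
            correct = sum((features[feature] >= threshold) == approved for features, approved in rows)
            if best is None or correct > best[2]:
                best = (feature, threshold, correct)
    return best


feature, threshold, correct = fit_stump(applications)
print(f"Rule: approve if {feature} >= {threshold} "
      f"({correct}/{len(applications)} training cases classified correctly)")
```

A rule like this is easy to audit and explain, but it usually gives up accuracy compared with a complex model; that trade-off is exactly the one regulated industries have to weigh.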

Applications of Machine Learning

Machine learning algorithms are essential for developing intelligent systems that learn from historical data and past experiences to deliver precise outcomes.

With the rising popularity of ML solutions, many industries are leveraging this technology to solve complex business problems and create innovative products and services.

From healthcare and defense to finance, marketing, and security, ML is transforming various industries.

1. Facial recognition/Image recognition

Facial recognition is one of the most widely used applications of artificial intelligence. For instance, iPhones use facial recognition technology to unlock the device.

This technology has several use cases, particularly in security, such as identifying criminals, searching for missing persons, and aiding forensic investigations.

Facial recognition technology can also be used for intelligent marketing, diagnosing diseases, tracking school attendance, and many other purposes.

2. Automatic Speech Recognition

Automatic speech recognition (ASR) is a technology that converts spoken words into digital text. It has various uses, such as verifying users’ identity through their voice and performing actions based on voice commands.

Speech patterns and vocabulary are fed into the system to train the ASR model. Today, ASR systems have a wide range of applications across many fields.

3. Financial Services

In Financial Services, Machine Learning has numerous practical applications. One of these is using Machine Learning algorithms to detect fraud. More recently, Machine Learning algorithms have also been used for Anti-Money Laundering (AML).

This is achieved by monitoring each user’s activities and assessing whether an attempted activity is typical of that user. Machine Learning can also be used for financial monitoring to detect money laundering activities, a crucial security use case.

Machine Learning is also beneficial for making better trading decisions, using algorithms that can analyze multiple data sources simultaneously. Other applications of Machine Learning in Financial Services include credit scoring and underwriting. Beyond finance, virtual personal assistants like Siri and Alexa are among the most common Machine Learning applications in daily life.
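The idea of flagging activity that is atypical for a particular user can be sketched with a simple per-user statistical baseline. This minimal example assumes hypothetical transaction amounts and flags a new amount whose z-score against that user's own history exceeds a chosen threshold (`Z_THRESHOLD` is an illustrative value). Production fraud and AML systems use far richer features and learned models, so treat this only as an illustration of the principle.

```python
import statistics

# Hypothetical transaction histories (amounts in dollars) per user.
history = {
    "alice": [42.0, 38.5, 55.0, 47.2, 40.1, 51.3],
    "bob": [1200.0, 950.0, 1100.0, 1300.0, 1050.0],
}

Z_THRESHOLD = 3.0  # hypothetical cut-off: flag amounts more than 3 standard deviations from the user's mean


def is_unusual(user: str, amount: float) -> bool:
    """Return True when `amount` is atypical for this specific user."""
    past = history[user]
    mean = statistics.mean(past)
    stdev = statistics.stdev(past)  # sample standard deviation; needs at least two past amounts
    if stdev == 0:
        return amount != mean
    return abs(amount - mean) / stdev > Z_THRESHOLD


print(is_unusual("alice", 900.0))  # True: far outside alice's usual spending
print(is_unusual("bob", 900.0))    # False: within bob's usual range
```

The same $900 payment is flagged for one user and not the other, which is the point of modeling what is typical per user rather than using one global rule.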

4. Marketing and Sales

Businesses are utilizing Machine Learning to improve their lead-scoring algorithms by considering various parameters such as website visits, opened emails, downloads, and clicks.

This method provides a more accurate score for each lead. Additionally, Machine Learning techniques, such as regression, help companies enhance their dynamic pricing models by making predictions.
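A minimal lead-scoring sketch, assuming hypothetical historical leads described by the parameters mentioned above (website visits, opened emails, downloads, clicks) and whether each lead converted. It standardizes the features and trains a small logistic regression with batch gradient descent (one reasonable model choice, not necessarily what any particular business uses), then scores a new lead as an estimated conversion probability.

```python
import math

# Hypothetical historical leads: (website visits, emails opened, downloads, clicks), converted (1) or not (0).
leads = [
    ((1, 0, 0, 1), 0), ((2, 1, 0, 2), 0), ((8, 5, 2, 9), 1), ((6, 4, 1, 7), 1),
    ((0, 1, 0, 0), 0), ((9, 6, 3, 12), 1), ((3, 2, 0, 3), 0), ((7, 3, 2, 8), 1),
]
n_features = 4

# Standardize each parameter so that no single one dominates because of its scale.
means = [sum(f[j] for f, _ in leads) / len(leads) for j in range(n_features)]
stds = [math.sqrt(sum((f[j] - means[j]) ** 2 for f, _ in leads) / len(leads)) for j in range(n_features)]


def standardize(features):
    return [(x - m) / s for x, m, s in zip(features, means, stds)]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def lead_score(weights, bias, features) -> float:
    """Estimated conversion probability for one lead (raw, unstandardized features)."""
    z = bias + sum(w * x for w, x in zip(weights, standardize(features)))
    return sigmoid(z)


# Train logistic regression with batch gradient descent on the log-loss.
weights, bias = [0.0] * n_features, 0.0
learning_rate = 0.1
for _ in range(1000):
    grad_w, grad_b = [0.0] * n_features, 0.0
    for features, converted in leads:
        error = lead_score(weights, bias, features) - converted
        grad_w = [g + error * x for g, x in zip(grad_w, standardize(features))]
        grad_b += error
    weights = [w - learning_rate * g / len(leads) for w, g in zip(weights, grad_w)]
    bias -= learning_rate * grad_b / len(leads)

new_lead = (5, 3, 1, 6)  # hypothetical incoming lead
print(f"lead score: {lead_score(weights, bias, new_lead):.2f}")
```

Because the output is a probability rather than a yes/no label, sales teams can rank leads by score and decide where to draw the cut-off.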

Another crucial application of Machine Learning is Sentiment Analysis. This method helps to determine consumer responses to a particular product or marketing initiative.

Brands use Machine Learning for Computer Vision to identify their products in online images and videos.

Computer Vision also helps measure brand mentions that are not accompanied by any relevant text. Chatbots are also becoming more responsive and intelligent due to Machine Learning.

5. Healthcare

Machine Learning is transforming healthcare, with crucial applications in predicting and preventing diseases. Radiotherapy is also becoming more advanced and effective through the incorporation of Machine Learning.

Early-stage drug discovery is another critical application of Machine Learning in the medical field. This process involves precision medicine and next-generation sequencing technologies. Clinical trials can be costly and time-consuming, but Machine Learning-based predictive analytics could help reduce these costs and timelines and deliver better results.

Furthermore, scientists worldwide are using Machine Learning technologies to predict epidemic outbreaks.

Future of Machine Learning

Machine learning algorithms have existed for decades, but their popularity has grown exponentially with the rise of artificial intelligence. In particular, deep learning models have revolutionized today’s most advanced AI applications.

Machine learning platforms are among the most competitive in enterprise technology. Major vendors like Amazon, Google, Microsoft, IBM, and others are racing to offer customers platform services encompassing the full spectrum of machine learning activities. This includes data collection, preparation, classification, model building, training, and application deployment.

As the importance of machine learning continues to grow in business operations and AI becomes more practical in enterprise settings, the competition among machine learning platforms will only intensify.

Continued research into deep learning and AI focuses on developing more general applications. AI models require extensive training to optimize their performance for a particular task. However, some researchers are exploring ways to make models more flexible by seeking techniques that allow a machine to apply context learned from one task to future, different tasks.

As the field expands, vendors will strive to provide the most comprehensive and user-friendly platform services, covering everything from data collection and preparation to deployment. Continued research into AI and deep learning should lead to more general applications, making AI more practical in enterprise settings.